The demo went great. The model pulled insights from your data that nobody expected. The board saw the presentation, asked a few questions, nodded approvingly. Budget was approved to move forward.

That was six months ago. The AI proof of concept is still sitting in a Jupyter notebook on someone’s laptop. Or maybe it got handed off to engineering, who took one look at it and said, “We can’t deploy this.” Or maybe it technically went live, but nobody trusts the outputs and the team has quietly gone back to spreadsheets.

This is not an unusual story. It is the default outcome for most AI projects.

Industry estimates vary, but most put the share of AI proofs of concept that never reach production somewhere between 80% and 90%. That number has not meaningfully improved despite billions of dollars in enterprise AI spending. The technology keeps getting better. The failure rate stays the same.

The reason is straightforward: the hard part was never building the demo. The hard part is everything that comes after.

The Demo Trap

A proof of concept is designed to answer one question: can this work? That is a useful question. But the conditions under which a POC answers “yes” are almost never the conditions under which the solution will need to operate.

POCs succeed because they are built in controlled environments. The data is curated, cleaned, and usually static. The scope is narrow — one use case, one data source, one happy path. There are no integration requirements, no latency constraints, no compliance considerations. The person who built it is the same person running it, so tribal knowledge substitutes for documentation and operational procedures.

This is fine for a proof of concept. That is literally what a proof of concept is for.

The problem is what happens next. The organization sees a working demo and assumes the remaining work is incremental. Just “scale it up.” Just “connect it to the ERP.” Just “make it available to the team.” Each of those “justs” represents months of engineering work and organizational change that was never scoped, never budgeted, and never staffed.

The POC-to-production gap is not a technology problem. It is a planning problem. Most organizations allocate about 80% of the budget to the proof of concept and 20% to productionization. The ratio should be roughly inverted.

We have written before about why AI pilots fail. The POC-to-production gap is the mechanical version of the same issue — the specific technical and organizational barriers that prevent a working prototype from becoming a working system.

The Five Gaps Between POC and Production

When an AI proof of concept stalls on the way to production, it is almost always stuck in one or more of these five gaps. Understanding them is the first step toward avoiding them.

The Data Gap

The POC used a clean dataset. Maybe it was an export from one system, manually reviewed, with known quality. In production, the model needs to consume data from multiple sources in near real time, handle missing fields, cope with schema changes, and process volumes that are orders of magnitude larger.

This is the gap we see most often in manufacturing and engineering companies. The AI readiness gap is fundamentally a data gap. The POC sidestepped it by using curated data. Production cannot.

Production-grade AI needs production-grade data pipelines. That means ingestion, validation, transformation, and monitoring — before the data ever reaches the model.
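To make the validation step concrete, here is a minimal sketch in Python. It assumes records arrive as dictionaries; the field names (`machine_id`, `temperature_c`, `timestamp`) and the rules are hypothetical placeholders, not taken from any particular system.

```python
from dataclasses import dataclass, field

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"machine_id": str, "temperature_c": float, "timestamp": str}


@dataclass
class ValidationResult:
    valid: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (record, reason) pairs


def validate_records(records):
    """Split records into those safe to send to the model and those rejected."""
    result = ValidationResult()
    for record in records:
        reason = None
        for name, expected_type in REQUIRED_FIELDS.items():
            if record.get(name) is None:
                reason = f"missing field: {name}"
                break
            if not isinstance(record[name], expected_type):
                reason = f"wrong type for {name}"
                break
        if reason is None:
            result.valid.append(record)
        else:
            result.rejected.append((record, reason))
    return result


batch = [
    {"machine_id": "M-1", "temperature_c": 71.5, "timestamp": "2024-01-01T00:00:00"},
    {"machine_id": "M-2", "temperature_c": None, "timestamp": "2024-01-01T00:00:05"},
    {"machine_id": "M-3", "temperature_c": "hot", "timestamp": "2024-01-01T00:00:10"},
]
result = validate_records(batch)
print(len(result.valid), "valid;", len(result.rejected), "rejected")
```

Keeping the rejected records together with a reason, rather than silently dropping them, gives the monitoring layer something to count and alert on.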

The Integration Gap

The POC lived in isolation. It pulled data from a CSV, ran inference, and displayed results in a notebook or a simple dashboard. Production means plugging into ERP systems, CRM platforms, operational databases, and downstream workflows.

Integration is where technical debt accumulates fastest. APIs need to be built and maintained. Authentication and authorization need to be handled. Data formats need to be negotiated between systems that were never designed to work together. Error handling needs to account for upstream systems going down, pushing bad data, or changing their schema without notice.
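As one illustration of handling an upstream system that goes down, here is a small retry-with-backoff sketch in Python. The `UpstreamError` exception and the `flaky_erp_export` function are hypothetical stand-ins for whatever client your source system actually exposes.

```python
import time


class UpstreamError(Exception):
    """Raised by a (hypothetical) client when a source system is unavailable."""


def fetch_with_retry(fetch, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call fetch(); on UpstreamError, retry with exponential backoff.

    Re-raises the last error once max_attempts is exhausted so the caller
    can decide what to do next (skip the batch, alert, use cached data).
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch()
        except UpstreamError:
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))


# Stand-in for an ERP call that fails twice, then succeeds.
calls = {"count": 0}


def flaky_erp_export():
    calls["count"] += 1
    if calls["count"] < 3:
        raise UpstreamError("ERP unavailable")
    return [{"order_id": 1}]


delays = []  # record the waits instead of actually sleeping
rows = fetch_with_retry(flaky_erp_export, sleep=delays.append)
print(rows, delays)
```

Retries only cover transient outages. Bad data and unannounced schema changes still need validation on the receiving side.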

For most mid-market companies, integration work represents 40% to 60% of the total effort to move from POC to production. It is rarely in the original project plan.

The Scale Gap

A POC that runs inference on 1,000 records in a batch job is fundamentally different from a production system processing millions of records with sub-second latency. The model architecture might be the same, but everything around it changes.

Scale introduces questions the POC never had to answer. Where does the model run? How do you handle concurrent requests? What happens when the inference service goes down? How do you manage costs as usage grows? What is the latency budget, and what do you do when the model exceeds it?

These are infrastructure questions, not data science questions. They require infrastructure skills that many AI teams do not have.

The People Gap

The POC was built by data scientists or external consultants. They understood the model, the data, and the business context. When something broke, they fixed it because they were sitting right there.

Production systems need operations teams. Someone has to monitor model performance, investigate anomalies, manage retraining pipelines, respond to incidents, and coordinate with the business when outputs drift. These are operational tasks that require a different skill set and organizational structure.

The people gap is the one that catches organizations most off guard. They assume the team that built the POC will also run the production system. That team is already on the next project.

The Governance Gap

The POC had no compliance requirements. No audit trail. No access controls. No explainability requirements. No model risk management. Nobody asked who was responsible when the model made a wrong prediction.

In production, all of those questions matter. Regulated industries need audit trails for every prediction. Sensitive data needs access controls and encryption. Models that influence business decisions need explainability — someone needs to answer why the model recommended what it recommended.

Governance is not optional overhead. It is a prerequisite for deploying AI in any context where the outputs affect decisions about real money, real products, or real people.

What Production-Ready AI Actually Requires

Moving from POC to production is not a single step. It is a set of capabilities that need to be built, tested, and maintained. Here is what production-ready AI actually looks like:

Data pipelines that ingest, validate, transform, and deliver data from source systems to the model reliably and on schedule. Not a one-time ETL script. A pipeline with monitoring, alerting, and error handling.

Model serving infrastructure that can handle production traffic patterns, scale up and down with demand, and fail gracefully when something goes wrong. This includes fallback logic — what happens when the model cannot return a prediction? The answer cannot be “the application crashes.”
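A minimal sketch of fallback logic in Python, assuming the model is exposed as a plain callable. The function names, the 0.2-second latency budget, and the `rule_of_thumb` business rule are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

# Shared worker pool so a slow model call can be abandoned after a deadline.
_executor = ThreadPoolExecutor(max_workers=4)


def predict_with_fallback(model_predict, features, fallback, timeout_s=0.2):
    """Return (value, source), falling back if the model fails or is too slow."""
    future = _executor.submit(model_predict, features)
    try:
        return future.result(timeout=timeout_s), "model"
    except FutureTimeout:
        # The slow call keeps running in its worker thread; we just stop waiting.
        return fallback(features), "fallback:timeout"
    except Exception:
        return fallback(features), "fallback:error"


def broken_model(features):
    raise RuntimeError("inference service unavailable")


def rule_of_thumb(features):
    # Hypothetical fallback: a simple business rule instead of a prediction.
    return "review" if features["amount"] > 1000 else "approve"


print(predict_with_fallback(broken_model, {"amount": 5000}, rule_of_thumb))
```

Returning the source alongside the value lets downstream systems and dashboards see how often the fallback path is being used, which is itself a health signal.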

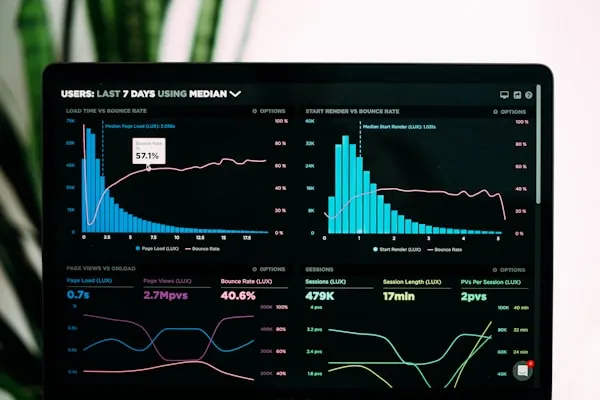

Monitoring and observability across the entire system. Not just uptime monitoring, but data quality monitoring, model performance monitoring, and business outcome monitoring. You need to know when the model’s accuracy is degrading before your users notice.
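Model performance monitoring can start very simply. The sketch below, in Python, tracks accuracy over a rolling window of predictions whose true outcome is later known. The class name, window size, and thresholds are illustrative assumptions, and it presumes ground-truth labels eventually arrive.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Tracks accuracy over the last `window` labelled predictions."""

    def __init__(self, window=100, alert_below=0.9, min_samples=20):
        self.outcomes = deque(maxlen=window)  # old outcomes drop off automatically
        self.alert_below = alert_below
        self.min_samples = min_samples

    def record(self, predicted, actual):
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_alert(self):
        # Avoid alerting on a handful of samples.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.alert_below


monitor = RollingAccuracyMonitor(window=10, alert_below=0.8, min_samples=5)
for predicted, actual in [(1, 1)] * 4 + [(1, 0)] * 3:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.should_alert())
```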

A retraining cadence that keeps the model current as the underlying data distribution shifts. This means automated evaluation, retraining triggers, validation gates, and a deployment pipeline that can push updated models without downtime.
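A retraining trigger and a validation gate can each be expressed as a small, testable rule. The Python sketch below uses accuracy as the metric; the function names and threshold values are hypothetical and would need to be agreed per use case.

```python
def needs_retraining(live_accuracy, baseline_accuracy, max_drop=0.05):
    """Trigger: live accuracy has fallen too far below the accepted baseline."""
    return baseline_accuracy - live_accuracy > max_drop


def passes_validation_gate(candidate_accuracy, production_accuracy, floor=0.85):
    """Gate: the retrained model must clear a floor and not regress."""
    return candidate_accuracy >= floor and candidate_accuracy >= production_accuracy


live, baseline = 0.82, 0.90
if needs_retraining(live, baseline):
    candidate = 0.91  # hypothetical score of the retrained model on held-out data
    if passes_validation_gate(candidate, production_accuracy=live):
        print("promote candidate")
    else:
        print("keep current model and investigate")
```

The gate is what keeps automated retraining from automatically shipping a worse model.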

Human-in-the-loop design for high-stakes decisions. Production AI systems should make it easy for humans to review, override, and provide feedback that improves the model over time.
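One common way to put this into practice is to route low-confidence predictions to a review queue and record the human decision. The Python sketch below is illustrative only; the threshold and field names are assumptions.

```python
def route_prediction(label, confidence, auto_threshold=0.9):
    """Send low-confidence predictions to a human review queue."""
    if confidence >= auto_threshold:
        return {"label": label, "decided_by": "model", "needs_review": False}
    return {"label": label, "decided_by": "pending_human", "needs_review": True}


def apply_review(decision, reviewer_label):
    """Record the human decision and whether it overrode the model."""
    return {
        "label": reviewer_label,
        "decided_by": "human",
        "needs_review": False,
        "model_label": decision["label"],
        "overridden": reviewer_label != decision["label"],
    }


decision = route_prediction("reject", confidence=0.62)
final = apply_review(decision, reviewer_label="approve")
print(decision["needs_review"], final["overridden"])
```

Stored overrides double as labelled examples for the next retraining run.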

If your production plan does not include monitoring, retraining, and fallback logic, you do not have a production plan. You have a demo with a deployment date.

The Right Way to Run an AI POC

The best way to close this gap is to design the proof of concept with production in mind from day one. That does not mean building a production system during the POC phase. It means making deliberate choices that reduce the distance to production.

Use production-representative data. Not a clean export. Not a sample dataset. Use the actual messy, incomplete, multi-source data that the production system will need to consume. If the model cannot handle that data, you want to know now, not six months from now.

Involve operations teams early. The people who will run the system in production should be in the room during the POC. They ask questions data scientists do not think to ask. How will this be monitored? What is the incident response process? Who gets paged at 2 AM?

Build the evaluation framework during the POC. Define what “good” looks like before you start building. Establish metrics, baselines, and thresholds. This evaluation framework becomes the foundation for production monitoring.
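Here is a minimal sketch of what such a framework might look like in Python for a classification use case. It compares the model to a naive baseline (always predicting the most common label) and to agreed thresholds. The threshold values and the sample labels are placeholders, not recommendations.

```python
from collections import Counter


def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def majority_baseline(labels):
    """Accuracy of always predicting the most common label."""
    most_common_count = Counter(labels).most_common(1)[0][1]
    return most_common_count / len(labels)


def evaluate(predictions, labels, min_accuracy=0.8, min_lift=0.1):
    """Compare the model to a naive baseline and to agreed thresholds.

    min_accuracy and min_lift are placeholder values; agree on the real
    ones with the business before building.
    """
    acc = accuracy(predictions, labels)
    base = majority_baseline(labels)
    return {
        "accuracy": acc,
        "baseline": base,
        "passes": acc >= min_accuracy and acc - base >= min_lift,
    }


labels = ["ok", "ok", "ok", "fail", "ok", "fail", "ok", "ok", "fail", "ok"]
predictions = ["ok", "ok", "ok", "fail", "ok", "fail", "ok", "fail", "fail", "ok"]
report = evaluate(predictions, labels)
print(report)
```

The baseline matters because a model with 90% accuracy is unimpressive if always guessing the majority class already achieves 88%.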

Document integration requirements explicitly. Every system the model connects to, every data source it consumes, every downstream workflow it triggers — document all of it during the POC, even if you are not building those integrations yet.

Estimate the full cost. The POC typically accounts for only 20% to 30% of the total investment. If you are making a go/no-go decision based on POC cost alone, you are working with incomplete information.
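The arithmetic is simple enough to run before the go/no-go meeting. This Python sketch takes a POC cost and the 20% to 30% share mentioned above and returns the implied range for the total investment. The 150,000 figure is a made-up example.

```python
def estimate_total_range(poc_cost, poc_share_low_pct=20, poc_share_high_pct=30):
    """Return (low, high) total investment implied by the POC's share of it."""
    low_total = poc_cost * 100 / poc_share_high_pct   # POC is a larger share
    high_total = poc_cost * 100 / poc_share_low_pct   # POC is a smaller share
    return low_total, high_total


poc_cost = 150_000  # hypothetical POC spend
low, high = estimate_total_range(poc_cost)
print(f"Total: {low:,.0f} to {high:,.0f}")
print(f"Still to fund after the POC: {low - poc_cost:,.0f} to {high - poc_cost:,.0f}")
```

With these inputs, a 150,000 POC implies a total of 500,000 to 750,000, which leaves 350,000 to 600,000 still to fund.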

What Experienced AI Teams Do Differently

Organizations that consistently get AI into production share a few common patterns.

They budget roughly 30% for the POC and 70% for productionization. They know the demo is the easy part, and they plan and staff accordingly.

They staff for operations, not just development. Building the model is a project. Running the model is a program. These require different people, different skills, and different organizational structures.

They build the platform before the models. Data pipelines, model serving infrastructure, monitoring — these are shared capabilities every AI project needs. Building them once is dramatically cheaper than rebuilding them for every project.

They treat AI deployments as products, not projects. A project has an end date. A production AI system has a roadmap, a backlog, and a team that owns it indefinitely.

The organizations getting real value from AI are not the ones with the most sophisticated models. They are the ones with the most mature operational capabilities around those models.

Where to Start

If you have an AI proof of concept that worked but has not made it to production, you are not alone. The gap between demo and deployment is real, and closing it requires a different kind of planning than what got you to the demo.

At Ryshe, we help mid-market engineering and manufacturing companies bridge this gap. Sometimes that means building the data foundations that production AI requires. Sometimes it means developing an AI strategy that accounts for the full lifecycle, from POC through production operations.

If you want to talk through where your AI initiative is stuck, reach out to us directly or schedule a session with our AI advisor. We will give you an honest assessment of what it will take to get from where you are to where you need to be.